CopyPress x Orq.ai

Welcome to Orq.ai, your control center for building, testing, and deploying LLM-powered software.

Master AI Model Evaluation with Orq.ai Experiments Module

In this webinar, Kyra Dresen (orq.ai) walks through a practical, end-to-end approach to AI model evaluation using orq.ai's Experiments module, from planning your success metrics to running live experiment comparisons in the UI.

Automate evals & observability with Claude Code + orq.ai

Orq.ai Full Platform Demo

This step-by-step tutorial walks you through the complete Orq.ai platform. You'll learn how to manage prompts, run experiments, set up evaluations, deploy to production, build Agents, and monitor them using Traces.

Release 4.1 feature highlight: Evaluatorq

Evaluatorq is a Python evaluation framework for running multiple experiments in parallel and measuring AI performance directly from your Python code.

Release 4.1 feature highlight: Memory Stores

Memory Stores are persistent storage for agent memories, allowing your agents to maintain context and recall information across conversations. Check out a short build to learn when to use Memory Stores and when to use a Knowledge Base instead.

Getting started with Agents Studio

Learn how to build your first agent in Agent Studio

Getting Started with MCP Server

Learn how to set up the Orq.ai MCP Server in your CLI.

How to use human feedback in Orq.ai

Find out how to use human feedback to improve your system's performance.

How to build datasets with historical data

Learn how to use historical data to build curated datasets in Orq.ai.

How to create and import test data

Learn how to easily import test data and use it as part of an evaluation workflow in Orq.ai.

How to Build RAG Pipelines in Orq.ai

Find out how you can create a knowledge base in Orq.ai in less than 2 minutes.

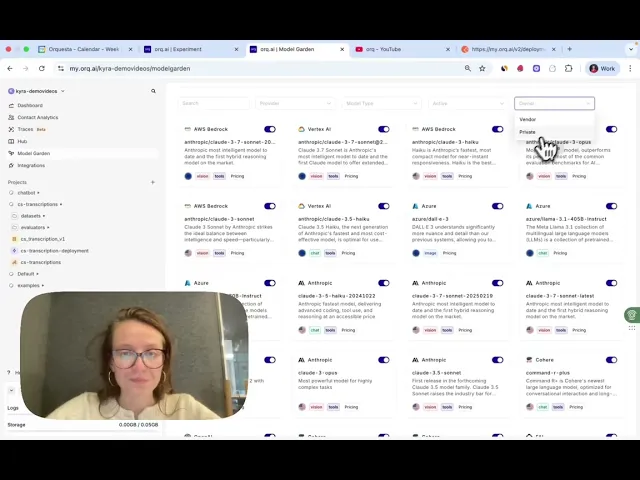

How to Set up the Model Garden

Learn how to enable models from OpenAI, Anthropic, and more for your GenAI use case.

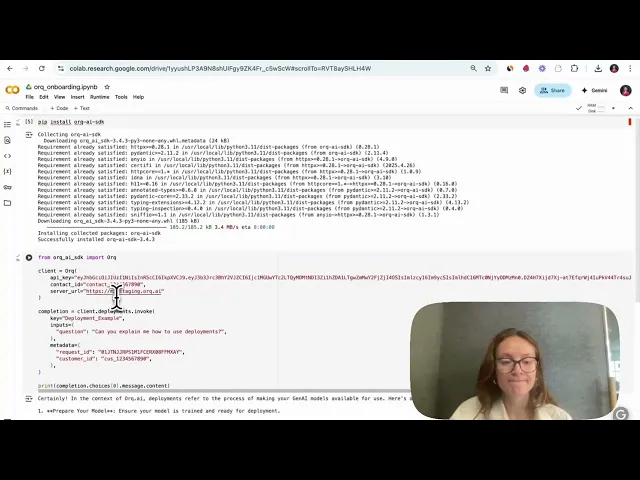

How to run a Deployment

Learn how to set up your first deployment and publish prompt and model changes.

Prompt Library, Fallbacks, & More

Learn how to use our platform to iterate safely on prompt configurations.

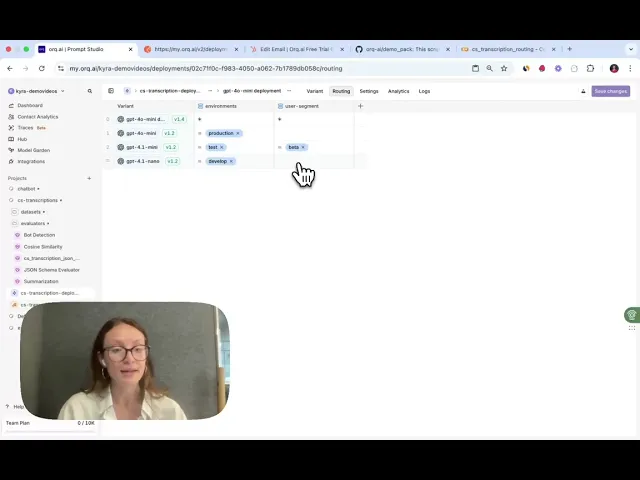

How to set up the Routing Engine

Discover how to run A/B tests, carry out canary releases, and set up contextualized routing.

How to run Logs & Traces

Use logs and traces to gain full visibility into your LLM app’s behavior.

How to run Experiments

Discover how to compare AI models, configure prompts, and evaluate results.

How to Interpret Experiments

Discover how to interpret experiment outputs to derive actionable insights.

How to Re-run Experiments

Find out how to add a second model to an existing experiment.

How to Evaluate LLMs (Walkthrough)

Learn how to measure LLM-generated output using Orq.ai.

Tidalflow.io x Orq.ai

Learn how Tidalflow delivers LLM-based features using Orq.ai.